Outmatic

We built and open-sourced a .NET implementation of TurboQuant, a near-optimal vector quantization algorithm. This post covers when it makes sense, when it doesn't, and what we learned working through the trade-offs with a real client.

Outmatic Engineering Team

When working with semantic search at scale, sooner or later the same problem shows up: memory. Not the model's memory, but the vectors it produces.

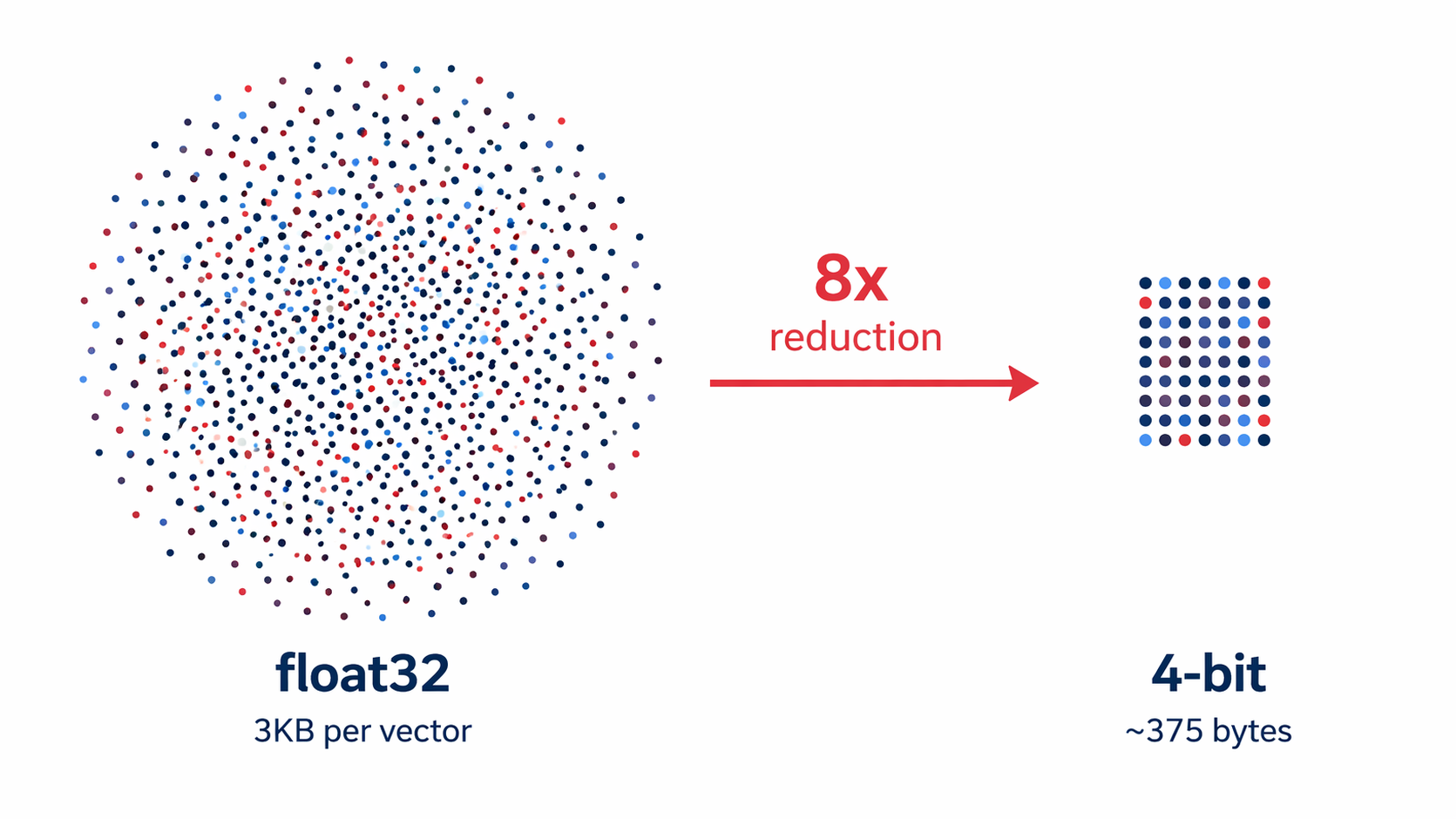

A 768-dimension embedding in float32 takes 768 × 4 bytes = 3,072 bytes, about 3 KB. Multiply that by a million documents and you're looking at roughly 3 GB just for the raw vectors. At tens of millions of documents, the cost of the infrastructure that holds them starts to outweigh the cost of running the model itself.
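The arithmetic is easy to reproduce. Below is a minimal Python sketch (Python only for brevity; the library discussed in this post is C#). `index_bytes` is a hypothetical helper that counts raw vector storage only and ignores graph structures, IDs, and any other index overhead:

```python
def index_bytes(num_vectors: int, dims: int, bits_per_coord: int) -> int:
    """Raw vector storage in bytes; ignores graph structures, IDs, and metadata."""
    total_bits = num_vectors * dims * bits_per_coord
    return (total_bits + 7) // 8  # round up to whole bytes

for label, bits in (("float32", 32), ("bfloat16", 16), ("4-bit", 4)):
    for n in (1_000_000, 10_000_000):
        gb = index_bytes(n, 768, bits) / 1e9  # decimal gigabytes
        print(f"{label:>8}, {n:>10,} vectors: {gb:6.2f} GB")
```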

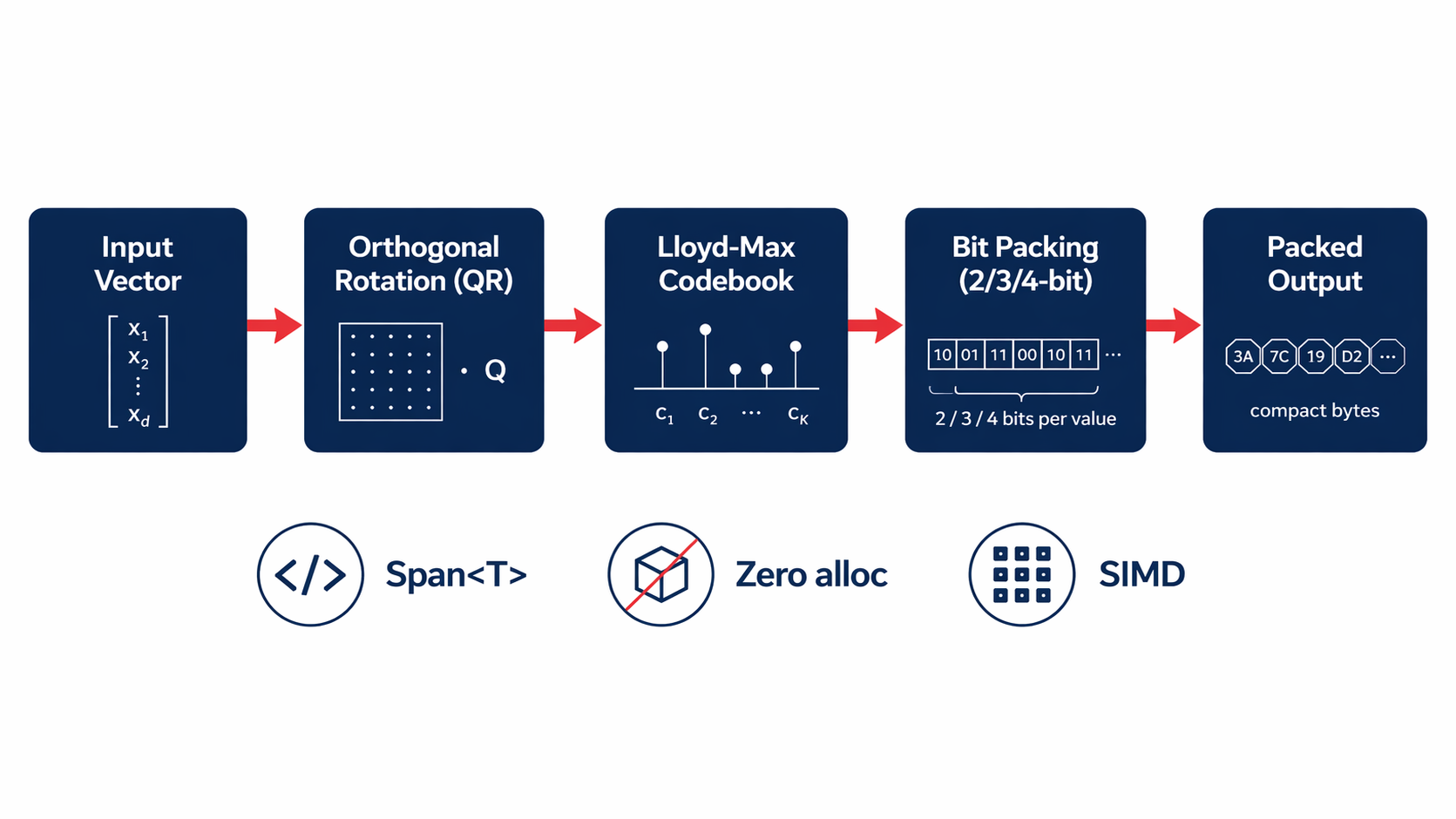

In April 2025, researchers from Google Research, NYU, and Google DeepMind published a paper introducing TurboQuant, later presented at ICLR 2026. The core idea isn't new: reduce the numerical precision of vectors to save memory. What's new is that TurboQuant gets close to the information-theoretic lower bound on distortion for a given number of bits, with zero calibration data and no metadata overhead.

In practice, that means 4 bits per coordinate instead of 32: an 8x memory reduction with near-identical quality on modern embedding models.
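The 8x comes from storing each coordinate as a 4-bit index into a 16-entry codebook and packing two indices per byte. Here is a minimal Python sketch of just that packing step. `pack_4bit` and `unpack_4bit` are hypothetical names, and the low-nibble-first layout is an assumption for illustration, not necessarily the layout TurboQuant for .NET uses:

```python
import random

def pack_4bit(codes: list[int]) -> bytes:
    """Pack 4-bit codes (0..15) two per byte: even index in the low nibble, odd in the high."""
    if any(not 0 <= c <= 15 for c in codes):
        raise ValueError("4-bit codes must be in 0..15")
    out = bytearray((len(codes) + 1) // 2)
    for i, c in enumerate(codes):
        out[i // 2] |= c if i % 2 == 0 else c << 4
    return bytes(out)

def unpack_4bit(packed: bytes, count: int) -> list[int]:
    """Inverse of pack_4bit; count is needed because an odd length leaves a spare nibble."""
    return [packed[i // 2] & 0x0F if i % 2 == 0 else packed[i // 2] >> 4 for i in range(count)]

codes = [random.randrange(16) for _ in range(768)]
packed = pack_4bit(codes)
assert unpack_4bit(packed, len(codes)) == codes
print(len(packed), "bytes vs", 768 * 4, "bytes as float32")  # prints: 384 bytes vs 3072 bytes as float32
```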

The ecosystem responded quickly with implementations in Python, Rust, and C. In .NET, unfortunately, there was nothing.

Working with a client using Vespa as a search engine with E5-768 embeddings, we asked ourselves whether it was worth bringing TurboQuant to C#. The short answer: it depends.

For that specific case, no. E5 models show measurable quality loss with aggressive quantization, and Vespa natively supports bfloat16, which halves memory with zero additional complexity. One line in the schema, no new code.
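bfloat16 is such a cheap win because it keeps float32's sign bit and 8-bit exponent and cuts the mantissa to 7 bits, so a bfloat16 value is simply the upper half of a float32 word. Vespa performs this conversion itself once a tensor field is declared with the `bfloat16` cell type. The Python sketch below uses hypothetical helper names and omits NaN and overflow handling. It only illustrates the format and its effect on cosine similarity:

```python
import math
import random
import struct

def float32_to_bfloat16_bits(x: float) -> int:
    """Round x to float32, then to bfloat16 (round-to-nearest-even); return the 16-bit pattern.
    NaN and overflow handling are omitted in this sketch."""
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    rounding_bias = 0x7FFF + ((bits >> 16) & 1)  # ties round to the even upper half
    return ((bits + rounding_bias) >> 16) & 0xFFFF

def bfloat16_bits_to_float(b: int) -> float:
    """Widen a bfloat16 pattern back to float32 by appending 16 zero mantissa bits."""
    return struct.unpack("<f", struct.pack("<I", b << 16))[0]

random.seed(0)
vec = [random.gauss(0.0, 1.0) for _ in range(768)]
bf16 = [bfloat16_bits_to_float(float32_to_bfloat16_bits(v)) for v in vec]
dot = sum(a * b for a, b in zip(vec, bf16))
cos = dot / (math.sqrt(sum(a * a for a in vec)) * math.sqrt(sum(b * b for b in bf16)))
print(f"{2 * len(vec)} bytes instead of {4 * len(vec)}; cosine to original = {cos:.6f}")
```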

But the question led us to think about when TurboQuant actually makes sense in a .NET architecture:

It makes sense when you keep a vector index in-process: feature stores, semantic caches, batch deduplication over tens of millions of documents. In these scenarios there's no Vespa or external vector store handling compression for you.
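To make "in-process" concrete, here is a deliberately naive semantic cache sketch in Python. The class name, the 0.95 threshold, and the brute-force scan are all illustrative assumptions. The point is that every key lives as full-precision floats in the application's own memory, so nothing compresses them unless you do. That key array is what a quantizer like TurboQuant would shrink:

```python
import math
from typing import Optional

class SemanticCache:
    """Hypothetical in-process semantic cache keyed by embedding vectors."""

    def __init__(self, threshold: float = 0.95):
        self.threshold = threshold          # minimum cosine similarity for a hit
        self._keys: list[list[float]] = []  # unit-normalized embeddings, kept in memory
        self._values: list[str] = []

    @staticmethod
    def _normalize(v: list[float]) -> list[float]:
        norm = math.sqrt(sum(x * x for x in v))
        if norm == 0.0:
            raise ValueError("cannot normalize a zero vector")
        return [x / norm for x in v]

    def put(self, embedding: list[float], value: str) -> None:
        self._keys.append(self._normalize(embedding))
        self._values.append(value)

    def get(self, embedding: list[float]) -> Optional[str]:
        """Brute-force scan; returns the closest cached value if it clears the threshold."""
        q = self._normalize(embedding)
        best_score, best_value = -1.0, None
        for key, value in zip(self._keys, self._values):
            score = sum(a * b for a, b in zip(q, key))  # dot product of unit vectors = cosine
            if score > best_score:
                best_score, best_value = score, value
        return best_value if best_score >= self.threshold else None

cache = SemanticCache(threshold=0.95)
cache.put([1.0, 0.0, 0.0], "cached answer A")
print(cache.get([0.99, 0.05, 0.0]))  # cosine ~0.9987, prints: cached answer A
print(cache.get([0.0, 1.0, 0.0]))    # cosine 0.0, prints: None
```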

It doesn't make sense when a system like Vespa already manages persistence and search. It adds complexity with no tangible benefit.

There was no C# implementation of TurboQuant. For anyone working with .NET at vector scale, that meant either bridging to Python (introducing latency and operational complexity) or giving up on the technique entirely.

We built and open-sourced TurboQuant for .NET: an implementation faithful to the paper's Algorithm 1, published on NuGet.

What's inside:

Span<T>

We validated the implementation against the paper's theoretical bounds. The measured mean-squared-error distortion, D_mse, matches the Lloyd-Max optimal distortion within 1%, and cosine similarity stays above 0.995 at 4 bits across all tested dimensions. The test suite covers 125 cases, including paper validation, end-to-end scenarios, thread safety, and robustness edge cases.
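For readers curious what this kind of validation looks like, here is a rough Python sketch of the general recipe behind TurboQuant: randomly rotate, then quantize each coordinate with an MSE-optimal scalar codebook. The sketch checks the result against the Lloyd-Max distortion. It is not the TurboQuant for .NET code or its API, and every name in it is hypothetical. Two substitutions keep it short. First, a randomized Hadamard transform (random sign flips followed by a Walsh-Hadamard transform) stands in for a dense random rotation, which restricts `dim` to powers of two such as 256 rather than 768. Second, the codebook is Lloyd-Max for a Gaussian, which approximates the exact (scaled Beta) coordinate distribution of a rotated unit vector in high dimensions:

```python
import bisect
import math
import random
from statistics import NormalDist

def _pdf(t: float) -> float:
    return math.exp(-0.5 * t * t) / math.sqrt(2 * math.pi)

def _cdf(t: float) -> float:
    return 0.5 * (1 + math.erf(t / math.sqrt(2)))

def _tpdf(t: float) -> float:
    """t * pdf(t), using its limit 0 at +/-infinity."""
    return 0.0 if math.isinf(t) else t * _pdf(t)

def _edges(levels):
    """Cells of nearest-level quantization: midpoints between levels, plus +/-inf."""
    mids = [(a + b) / 2 for a, b in zip(levels, levels[1:])]
    return [-math.inf] + mids + [math.inf]

def lloyd_max_gaussian(bits: int, iters: int = 2000) -> list[float]:
    """MSE-optimal (Lloyd-Max) scalar codebook for a standard normal variable."""
    n = 2 ** bits
    # Start near the high-resolution optimum: level density ~ pdf^(1/3), i.e. N(0, 3) quantiles.
    start = NormalDist(0.0, math.sqrt(3.0))
    levels = [start.inv_cdf((i + 0.5) / n) for i in range(n)]
    for _ in range(iters):
        e = _edges(levels)
        # Centroid condition: each level moves to the conditional mean of its cell.
        levels = [(_pdf(a) - _pdf(b)) / (_cdf(b) - _cdf(a)) for a, b in zip(e, e[1:])]
    return levels

def gaussian_distortion(levels) -> float:
    """Exact MSE when N(0, 1) is quantized to the nearest of the sorted levels."""
    total = 0.0
    e = _edges(levels)
    for c, a, b in zip(levels, e, e[1:]):
        p = _cdf(b) - _cdf(a)
        m1 = _pdf(a) - _pdf(b)        # integral of x * pdf(x) over [a, b]
        m2 = p + _tpdf(a) - _tpdf(b)  # integral of x^2 * pdf(x) over [a, b]
        total += m2 - 2 * c * m1 + c * c * p
    return total

def fwht(v):
    """Unnormalized fast Walsh-Hadamard transform; len(v) must be a power of two."""
    v = list(v)
    h = 1
    while h < len(v):
        for i in range(0, len(v), 2 * h):
            for j in range(i, i + h):
                v[j], v[j + h] = v[j] + v[j + h], v[j] - v[j + h]
        h *= 2
    return v

class ToyRotatedQuantizer:
    """Hypothetical sketch: random sign flips + Hadamard rotation, then Lloyd-Max per coordinate."""

    def __init__(self, dim: int, bits: int, seed: int = 0):
        if dim <= 0 or dim & (dim - 1):
            raise ValueError("dim must be a power of two for the Hadamard transform")
        rng = random.Random(seed)
        self.dim = dim
        self.signs = [rng.choice((-1.0, 1.0)) for _ in range(dim)]
        self.levels = lloyd_max_gaussian(bits)
        self.bounds = _edges(self.levels)[1:-1]

    def quantize(self, x):
        # For a unit vector x, fwht(signs * x) is sqrt(dim) times the rotated vector,
        # so its coordinates are approximately N(0, 1) and match the codebook's scale.
        z = fwht([s * xi for s, xi in zip(self.signs, x)])
        return [bisect.bisect_left(self.bounds, zi) for zi in z]

    def dequantize(self, codes):
        z = [self.levels[k] for k in codes]
        # Inverse rotation: x = signs * H(z / sqrt(dim)) / sqrt(dim) = signs * H(z) / dim.
        return [s * v / self.dim for s, v in zip(self.signs, fwht(z))]

def validate(dim: int = 256, bits: int = 4, trials: int = 200, seed: int = 1):
    """Empirical D_mse and worst-case cosine over random unit vectors, vs. the Lloyd-Max MSE."""
    rng = random.Random(seed)
    q = ToyRotatedQuantizer(dim, bits)
    mse, worst_cos = 0.0, 1.0
    for _ in range(trials):
        g = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        norm = math.sqrt(sum(t * t for t in g))
        x = [t / norm for t in g]
        xh = q.dequantize(q.quantize(x))
        mse += sum((a - b) ** 2 for a, b in zip(x, xh)) / trials
        cos = sum(a * b for a, b in zip(x, xh)) / math.sqrt(sum(b * b for b in xh))
        worst_cos = min(worst_cos, cos)
    return mse, gaussian_distortion(q.levels), worst_cos

mse, target, worst_cos = validate()
print(f"empirical D_mse={mse:.5f}  Lloyd-Max={target:.5f}  worst cosine={worst_cos:.4f}")
```

With the defaults, the empirical D_mse should land close to the 4-bit Gaussian Lloyd-Max value of about 0.0095, and the worst-case cosine between a vector and its reconstruction should stay above 0.99 in this toy setup.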

The most useful takeaway isn't technical. It's methodological: before implementing an optimization technique, it's worth understanding whether the problem it solves is your actual problem.

In our client's case, the answer was native bfloat16: available, free, already in the system. TurboQuant would have been the right answer to a slightly different question.

This kind of reasoning, starting from real context before choosing the technology, is what we try to bring to every project.